Having reviewed the main climate myths, it is time to look at the element that unifies all these topics: climate models.

Climate models are important simulation tools for improving our understanding of climate behaviour on seasonal, annual, decadal and centennial time scales. Models can be used to determine the extent to which observed climate changes may be due to natural variability, human activity or a combination of both.

But how does a climate model work? Is it true that some require supercomputers the size of a tennis court? How have these models evolved and, more importantly, are they reliable? To answer these questions, we enlisted the help of Marie-Alice Foujols, a CNRS research engineer who was technical manager of the IPSL’s climate modelling unit until 2018.

What is a climate model?

Climate models are an underlying topic throughout the articles in our climate series. Indeed, all the research topics discussed have one thing in common: the use of climate models. First of all, let us define what a climate model is.

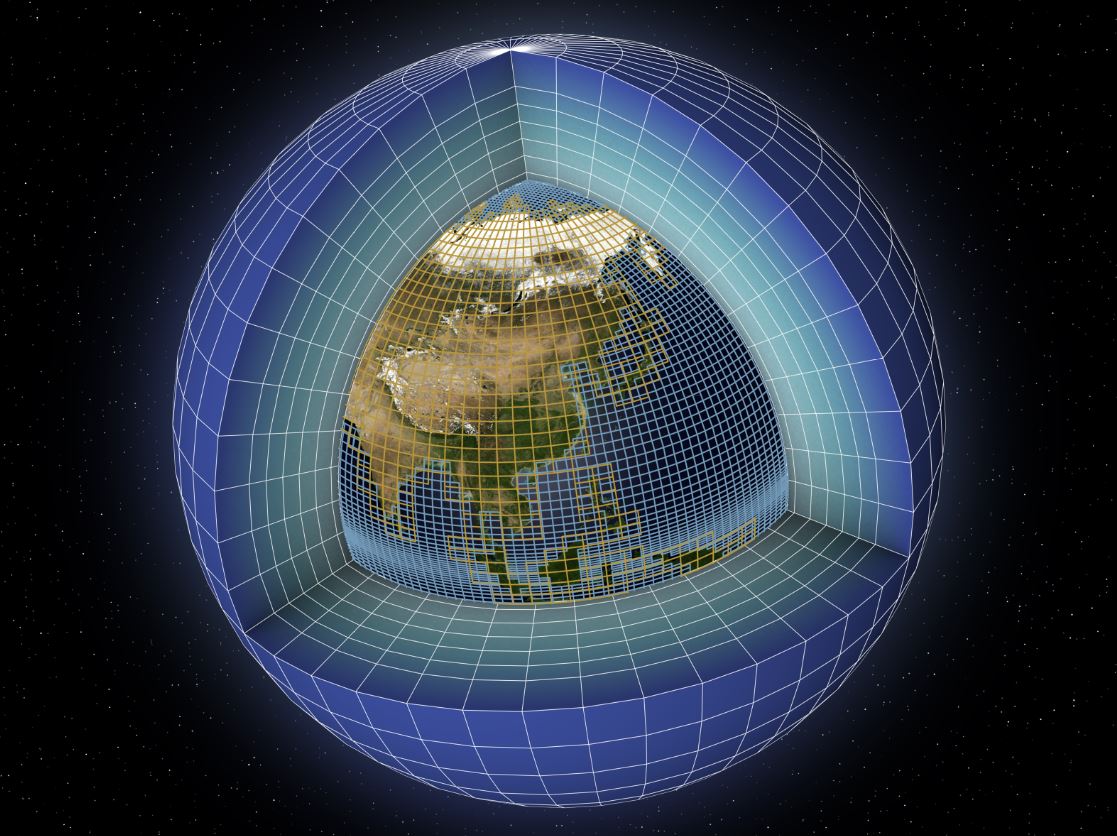

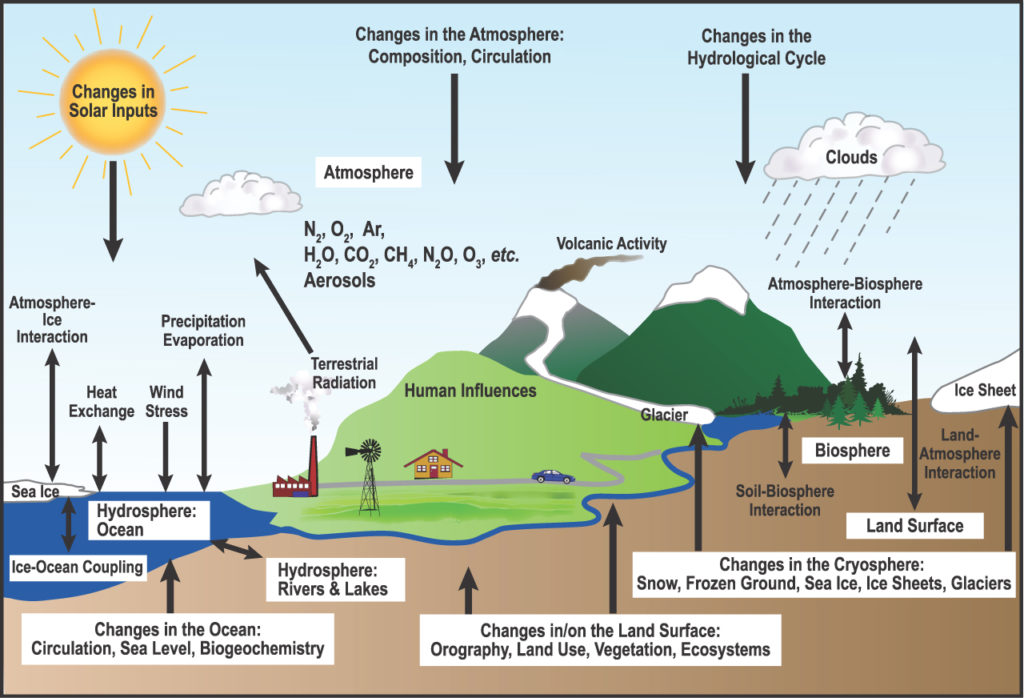

The IPCC defines climate models as “highly sophisticated computer programmes that encompass our understanding of the climate system and simulate, with as much fidelity as possible, the complex interactions between the atmosphere, the ocean, the land surface, snow and ice, the global ecosystem and various chemical and biological processes”.

The exchange of energy between the components of the climate system is key to any long-term climate projection. The four main components treated in a climate model are the following:

- The atmospheric component, which simulates clouds and aerosols, and plays an important role in the movement of heat and water around the globe.

- The land surface component, which simulates surface features such as vegetation, snow cover, soil water, rivers and carbon storage.

- The ocean component, which simulates the movement and mixing of ocean currents, as well as biogeochemistry, since the ocean is the dominant reservoir of heat and carbon in the climate system.

- The sea ice component, which modulates the absorption of solar radiation and the air-sea exchange of heat and water.

Climate model results and projections provide essential information to better inform decisions of national, regional and local importance related to climate and climate statistics, such as water resource management, agriculture, transport, urban planning, etc. Whether to help scientists better understand past climates or to make projections for this century or the next, models are an essential tool for understanding and simulating the Earth’s climate.

It took decades of innovation to make this possible, and with something slightly bigger than a laptop…

Evolution and computational power of climate models

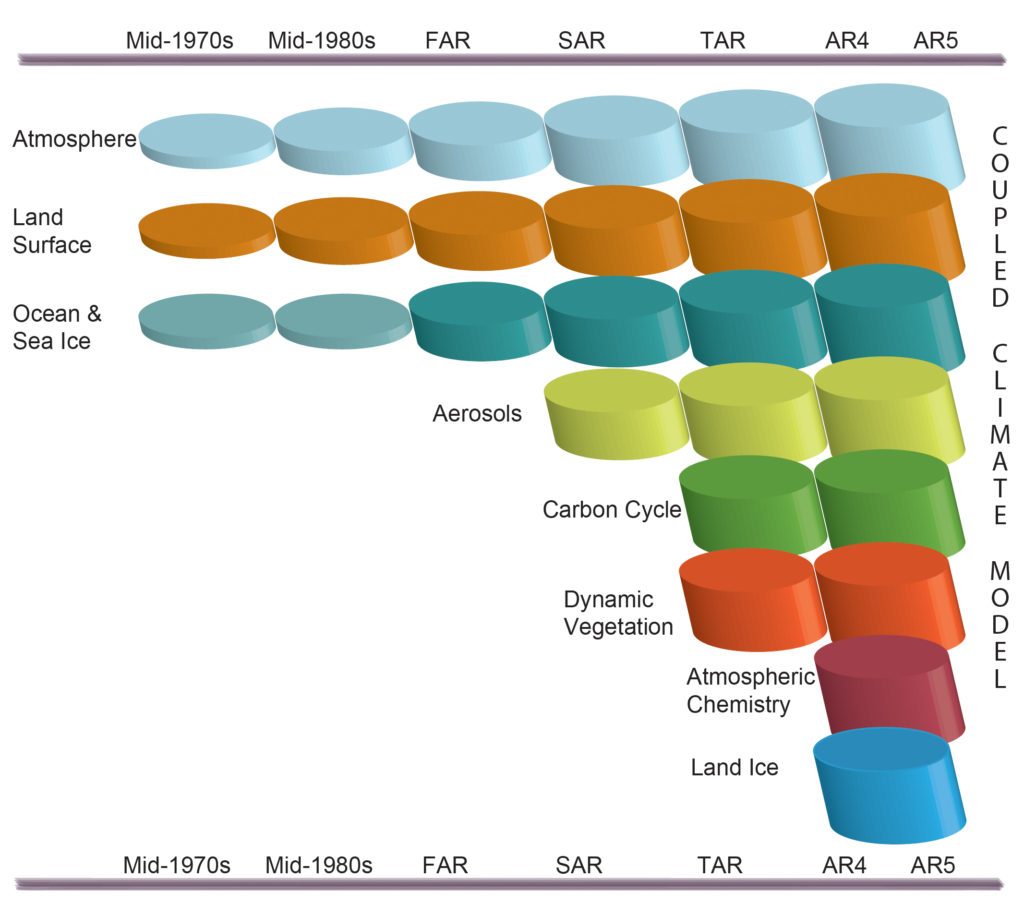

The complexity of climate models has considerably increased since 1990 and the first IPCC assessment report. Indeed, other representations of physical processes have been added over time, such as the inclusion of different types of clouds, interactions between land surfaces, the representation of the global carbon and sulphur cycles, etc.

The figure below shows the development of climate models over the past 35 years and how the different components have been coupled into comprehensive climate models over time. In each aspect (e.g. the atmosphere, which includes a wide range of atmospheric processes), the complexity and range of processes has increased over time (illustrated by increasing cylinders).

It should be noted that, at the same time, horizontal and vertical resolution has increased considerably, e.g. for spectral models from T21L9 (horizontal resolution of about 500 km and 9 vertical levels) in the 1970s to T95L95 (horizontal resolution of about 100 km and 95 vertical levels) today, and that ensembles with at least three independent experiments can now be considered standard.

Source : https://www.ipcc.ch/site/assets/uploads/2018/02/Fig1-13-1024×904.jpg

The figure below shows, in a more pictorial way, how over the decades more and more climate processes have been incorporated into global models, from the mid-1970s until the IPCC Fourth Assessment Report (‘AR4’), published in 2007:

The complexity of the climate system and its many interactions means that computer modelling is the only way to look into the past and even the future. In the introduction, we asked whether a computer the size of a tennis court was needed to run a climate model… Well, it’s true!

Trillions (and more!) of calculations per second

We have seen that meteorology and climatology have a lot in common. In a way, a climate model produces the same thing as a weather model, but the result, instead of covering the next few hours, covers the decades to come. In the first case, the initial state is of primary importance; in the second, none of the components of the climate system described above can be omitted.

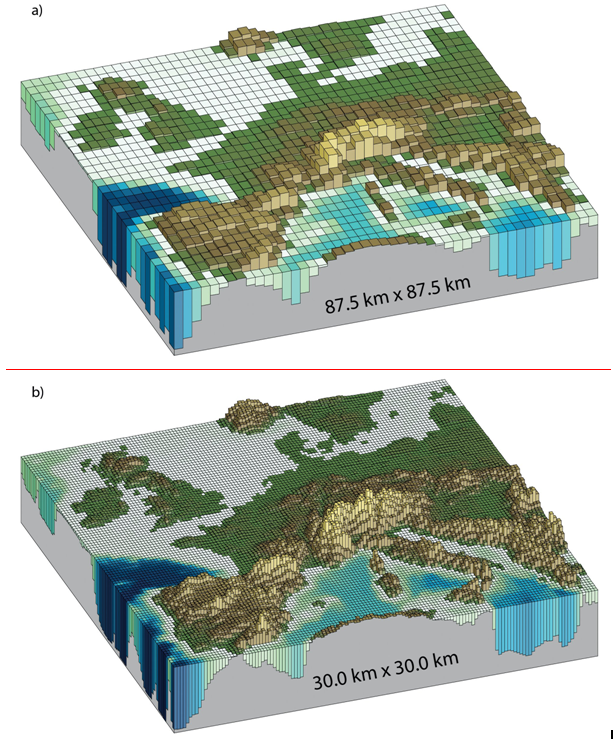

The programs that describe the calculations to be carried out for a climate simulation are made up of many subroutines totalling several hundred thousand (or even millions of) lines of code. The atmosphere, oceans and land surface are divided into grids (latitude, longitude, altitude or depth). Time itself is broken down into elementary steps of 15 to 30 minutes. For each point of the mesh, time step by time step, these programs calculate the evolution of the different state variables (temperature, pressure, etc.) of each component of the climate system.
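To get a feel for the size of the problem, here is a rough back-of-the-envelope sketch in Python. The 100 km mesh, the 95 vertical levels and the 30-minute time step are figures quoted in this article; the Earth's surface area (about 510 million km²) and the idea of tiling it with uniform square cells are simplifying assumptions made for illustration. Real models use more elaborate grids.

```python
# Rough sizing of a global atmospheric grid (illustrative only).
EARTH_SURFACE_KM2 = 510e6   # approximate surface area of the Earth (assumption)
CELL_SIZE_KM = 100          # horizontal mesh size quoted in the article
VERTICAL_LEVELS = 95        # vertical levels quoted for recent models
TIME_STEP_MIN = 30          # upper end of the 15 to 30 minute range


def grid_columns(surface_km2, cell_km):
    """Number of square cells of side cell_km needed to tile the surface."""
    return round(surface_km2 / cell_km**2)


def steps_per_year(step_min):
    """Number of time steps in a 365-day year."""
    return 365 * 24 * 60 // step_min


columns = grid_columns(EARTH_SURFACE_KM2, CELL_SIZE_KM)
cells = columns * VERTICAL_LEVELS
steps = steps_per_year(TIME_STEP_MIN)

print(f"{columns:,} columns, {cells:,} grid cells")
print(f"{steps:,} time steps per simulated year")
print(f"{cells * steps:,} cell updates per year, per state variable")
```

Even under these crude assumptions, a single simulated year means tens of billions of cell updates for each state variable, and that is for the atmosphere alone.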

Carrying out all these calculations in a reasonable amount of time requires significant computing resources, which can be found in national or European computing centres accessible to academic research (through GENCI in France) or in dedicated centres. For this reason, the world’s leading weather computing centres are equipped with the most powerful computers. As an example, Météo France’s computing resources have just quadrupled in 5 years.

This video details what a climate model is and the importance of computing resources: https://vesg.ipsl.upmc.fr/thredds/fileServer/IPSLFS/brocksce/dev_comm/animation/ipsl_full_english_music.mov

How exactly does it work?

Today’s computers, consisting of a large number of processors (computing cores) working in parallel, make it possible to simulate climate change over periods ranging from a few months to several thousand years. Due to the complexity of the climate system and the limits of computing power, a model cannot calculate all these processes for every cubic metre of the climate system. Instead, a climate model divides the Earth into a series of boxes or ‘grid cells’. A global model can have dozens of layers spanning the height of the atmosphere and the depth of the oceans.

Source : Fig. 1-14 AR5 GIEC/IPCC

Climate studies often require modelling the climate over many years in order to understand the physical mechanisms that govern its variability and to estimate its statistical properties. For example, with today’s computing resources, it takes almost a whole year to carry out a 2000-year simulation with a mesh size of around 100 km (using several thousand computing cores simultaneously).

Choices must therefore be made between different options, particularly in light of the available computing resources. The choice depends on the field of interest (climate change, seasonal to decadal forecasting, process studies, long paleoclimate simulations, etc.) and always involves a compromise on the level of detail of the simulated climate.

Are the models better today?

As they take more and more elements into account, it is legitimate to consider that today’s climate models are ‘better’ than the climate models of the 1990s. Advances in computing have also helped greatly: computing power is 1 million times greater (6 orders of magnitude) than in the 1990s. Today we can simulate 10 years per day, which would have been impossible with today’s models on the computers of 30 years ago.
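The link between throughput and waiting time is simple arithmetic, sketched below in Python. The function name is ours, and the calculation assumes the machine runs without interruption at the 10 simulated years per day quoted above.

```python
def wall_clock_days(simulated_years, years_per_day):
    """Days of continuous computing needed at a given throughput."""
    if years_per_day <= 0:
        raise ValueError("throughput must be positive")
    return simulated_years / years_per_day


for length in (100, 2000):
    days = wall_clock_days(length, years_per_day=10)
    print(f"{length}-year simulation: about {days:.0f} days of computing")
```

At this idealised rate, the 2000-year simulation mentioned earlier needs about 200 days of uninterrupted computing, the same order of magnitude as the "almost a whole year" quoted above.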

The fact that we have much more data than before is also a real plus. The computer programme takes into account our understanding of the phenomena but also external forcings (GHG levels, solar radiation, volcanic eruptions, etc.). Observations made directly in the field or indirectly are what enabled us to understand these phenomena in the first place. For example, we send satellites to observe the atmosphere, cloud cover, precipitation, oceans or polar ice caps, we launch balloons with sensors to analyse the atmosphere, and every day thousands of ARGO floats provide us with information on a whole range of marine parameters. Indirectly, all these data give us information about the climate and allow us to enrich the models.

However, as always, it is a little more complicated than that. The IPCC states in its latest report in 2014:

Each piece of added complexity, while intended to improve one aspect of the simulated climate, also introduces new sources of possible error (e.g. via uncertain parameters) and new interactions between model components which may, if only temporarily, degrade a model’s simulation of other aspects of the climate system.

Modelling variability is not an easy task: it also results from forcings external to the system, whether natural (such as solar variability or volcanic activity) or anthropogenic (linked to human activity). This can be seen in our article on radiative forcing. But to the extent that we have a better understanding of various climate processes and a better representation of these processes in the models, we can consider that the models are improving.

How do we know that the climate models are relevant?

This section aims to address two common criticisms from climate deniers: firstly, that “the models are untested”, and secondly, that “they have always been wrong until now”. Both are wrong.

“Climate models are not tested”

The assessment of the ability of models to represent the different characteristics of the climate consists of comparing the results of a simulation with the different observations available. The methods used range from simple comparisons of mean and variability maps (temperature, rainfall, etc.) to more sophisticated estimates of model-data agreement using complex statistical methods.

These more advanced methods make it possible to give an objective measurement, taking into account the uncertainties of both the observations and the simulations, in particular those linked to limited time sampling. Each component of a climate model (ocean, atmosphere, sea ice, vegetation, etc.) is first validated separately before being integrated into the complete system.

This first step makes it possible to judge the intrinsic performance of each element. The complete, or coupled, system is then evaluated on various aspects.
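The simplest kind of model-data comparison described above can be sketched in a few lines of Python. This is a minimal illustration, not the method used by any modelling centre: the two metrics (mean bias and root-mean-square error) are standard, but the function names and the temperature values are made up for the example.

```python
import math


def mean_bias(simulated, observed):
    """Average of (simulated - observed): positive means the model is too warm."""
    if len(simulated) != len(observed):
        raise ValueError("series must have the same length")
    diffs = [s - o for s, o in zip(simulated, observed)]
    return sum(diffs) / len(diffs)


def rmse(simulated, observed):
    """Root-mean-square error: typical size of the model-observation mismatch."""
    if len(simulated) != len(observed):
        raise ValueError("series must have the same length")
    squared = [(s - o) ** 2 for s, o in zip(simulated, observed)]
    return math.sqrt(sum(squared) / len(squared))


# Made-up temperatures (°C) at four locations, for illustration only.
observed = [14.0, 25.0, -3.0, 9.0]
simulated = [15.0, 24.0, -2.0, 10.0]

print(f"mean bias: {mean_bias(simulated, observed):+.2f} °C")
print(f"RMSE:      {rmse(simulated, observed):.2f} °C")
```

The two numbers answer different questions: the bias shows whether errors lean systematically one way, while the RMSE also captures errors that cancel out on average.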

Evaluation criteria for climate models

Climate models are evaluated using a number of assessment criteria to determine whether or not a model is appropriate:

- Representation of the average climate (cloud distribution, main winds, temperature, etc.)

- Ability to reproduce the seasonal characteristics of the climate in each region (simulation of tropical monsoons, Arctic freeze-up in winter…)

- Ability to simulate the interannual to decadal variability observed in the ocean and the atmosphere.

- Ability of models to represent recent observed climate trends (20th century global warming of 0.74°C, sea level rise of 17 cm)

- Paleoclimate simulations are also called upon to verify that we can be confident in the sensitivity of the models to reproduce a significant change in climate, as estimated from the climate record (ice cores, sediment, etc.) when external forcings change. Several periods of the past are thus examined.

Not tested… really?

After two years of intensive evaluation by the French teams, the French models (IPSL and CNRM-Cerfacs), like the other international models, have been independently examined in the last two phases of the international project for comparing simulations of current, past and future climate (CMIP5 in 2010-2012 and CMIP6 in 2017-2019*). Teams from all over the world analyse all the CMIP simulations made available by the different modelling groups. Each of these studies focuses on a particular aspect of the climate system and provides an objective, multi-faceted assessment of these models.

Because of the complexity and diversity of the physical, dynamic, chemical and biological processes involved, researchers from a wide range of backgrounds and expertise contribute to the modelling of the climate system, a truly collective effort. In general, each model is tested and retested, including simulations of the recent period, simulations of the past climate (Holocene or last glacial maximum), idealized simulations (1% increase per year in CO2 in the atmosphere, a sudden increase of a factor of 4 in the concentration of CO2 in the atmosphere…) and climate projections according to several scenarios.
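The idealised "1% per year" experiment is a case of compound growth, and a short Python calculation shows how it relates to doubling or quadrupling CO2. The function name is ours; the only input taken from the text is the 1% annual growth rate.

```python
import math


def years_to_multiply(factor, growth_rate=0.01):
    """Years of compound growth at growth_rate needed to multiply a quantity by factor."""
    return math.log(factor) / math.log(1 + growth_rate)


print(f"doubling:    {years_to_multiply(2):.1f} years")
print(f"quadrupling: {years_to_multiply(4):.1f} years")
```

At 1% per year, the CO2 concentration doubles after roughly 70 years and quadruples after roughly 140 years, whereas the abrupt experiment applies the factor of 4 all at once.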

To say that the models are not tested is therefore false: their robustness relies in particular on the fact that they are tested, validated and evaluated over several years and by several international teams.

“They have always been wrong until now”

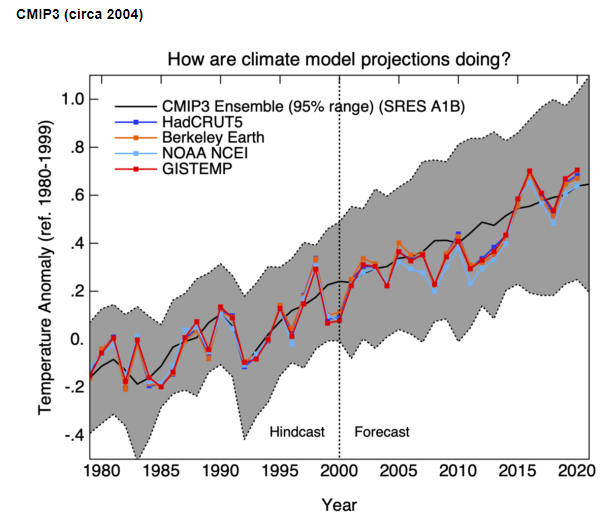

The second argument of the climate deniers is that “the models have always been wrong”. This is wrong again.

Below are the global temperature projections made in 2000 for the period 2000-2020 with the climate model versions of the time (synthesised as part of phase 3 of the CMIP project, in preparation for the IPCC Fourth Assessment Report). Clearly, the forecasts made 20 years ago are in line with what has actually been observed. And again, we have seen that with better technical means, the models have improved further in 20 years and have therefore become more precise… unfortunately, there is no one more blind than a person who does not wish to see.

Source : https://www.realclimate.org/index.php/climate-model-projections-compared-to-observations/

More broadly, an article reviewing the climate models published since 1973 shows that scientists have generally been quite accurate in predicting the warming that lay ahead of them. The models all show results that are reasonably close to what actually happened, especially when differences between predicted and actual CO2 concentrations and other climate forcings are taken into account.

Different models, different results… is one climate model better than the others?

The models are also often criticised for producing different results: there is said to be “variability between +1°C and +5°C between models”, which is taken as proof that they are not reliable. Once again, this is not true. On top of the differences between models, which show similar warming behaviour, there are differences between the scenarios.

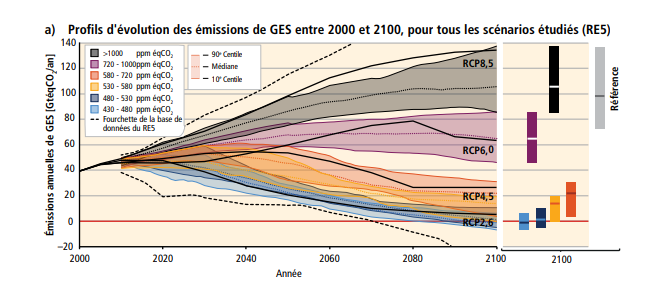

Indeed, the results depend on the greenhouse gases that we (humans) will emit in the coming decades. These scenarios (to take a big shortcut) reflect how much the temperature will rise if we increase the greenhouse gas concentration by ‘so many ppm’. (Put more simply, the more you fill the bath with GHGs, the hotter it gets.) We therefore have several scenarios: RCP2.6 (increase in radiative forcing of +2.6 W/m²) for the most ambitious scenario, RCP4.5, RCP6.0 and finally RCP8.5, the “do whatever you want” scenario:

Scientists tell us what could happen if we increase our greenhouse gas emissions and as such make assumptions. This is their job. Taking appropriate decisions and measures is a matter for politicians.

Is one climate model better than another?

Report after report, the IPCC clarifies the climate models, the way they are evaluated, and their relevance. Previous reports have generally provided a fairly broad overview of model performance, showing the differences between model-calculated versions of various climate quantities and the corresponding observational estimates.

Inevitably, some models perform better than others for certain climate variables. But no one model is clearly ‘the best’.

Uncertainties and limitations

Despite the progress made, scientific uncertainty about the details of several processes remains. Climate models generally have great difficulty in satisfactorily representing certain types of clouds in the atmosphere, and in particular low clouds that have little vertical extent.

It is now well established that the uncertainties about climate change in the 21st century are mainly linked to the representation of all cloud processes and that efforts must be made, among other things, on this subject. If there are differences in the models, it is because there is progress to be made in the modelling of certain processes: in particular, more measurements and more observations are needed. The most important thing to remember is that these uncertainties are known, estimated, and that scientific research aims to reduce them.

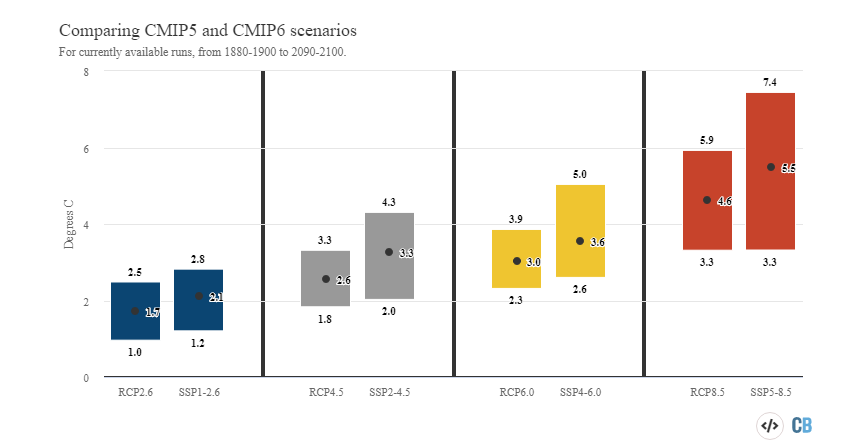

As an example, this figure shows the different levels of warming (the lowest, the highest and the average of all models) according to 4 scenarios for the results of the simulations of phases 5 and 6 of the CMIP project, simulations made at 7-year intervals by several international groups.
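The kind of summary shown in such a figure (lowest, highest and average across models, per scenario) can be sketched as follows in Python. The warming values and the model and scenario names are placeholders invented for the example; they are not CMIP results.

```python
from statistics import mean

# Hypothetical end-of-century warming (°C) per model and scenario.
# These numbers are placeholders, NOT actual CMIP results.
projections = {
    "low-emission scenario": {"model_a": 1.4, "model_b": 1.8, "model_c": 2.2},
    "high-emission scenario": {"model_a": 3.6, "model_b": 4.4, "model_c": 5.2},
}


def ensemble_summary(by_model):
    """Lowest, average and highest value across the models of one scenario."""
    values = list(by_model.values())
    return {"min": min(values), "mean": mean(values), "max": max(values)}


for scenario, by_model in projections.items():
    s = ensemble_summary(by_model)
    print(f"{scenario}: {s['min']:.1f} to {s['max']:.1f} °C (mean {s['mean']:.1f} °C)")
```

Grouping by scenario first is the important design choice: it separates the spread that comes from the models from the spread that comes from our future emissions.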

The French and international community has worked hard in recent years to improve the representation of different cloud types. New validation techniques using satellite data, essential for climate studies, have been proposed to sample the characteristics of the different types of clouds simulated in a model correctly.

Future climate models: what prospects for improvement?

Before concluding, there is some good news: there are several possible areas of improvement for climate models.

Firstly, simulation has become more professional: the experience of researchers, coupled with computing power that has increased by a factor of more than a million in 30 years, has made the most recent climate models better than their predecessors. This is how research works: it builds on what already exists and seeks to improve it.

Discussions are underway on how to run climate models on the latest generation of supercomputers (massively parallel, based on low-cost, high-performance computing cores) such as those planned in the EuroHPC project. The future increase in computing resources will make it possible, provided that the necessary modifications are made to our codes, either to carry out these simulations more quickly, or to take into account more precisely certain physical phenomena or to introduce new ones (evolution of the ice cap, wave heights, etc.), or to refine the mesh (typically 20 km instead of the current 100 km), or to better estimate the envelope of results by multiplying the simulations carried out.
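To see why refining the mesh from 100 km to 20 km is so demanding, here is a rough scaling estimate in Python. It rests on two assumptions that are not from the article: the number of vertical levels stays the same, and the time step has to shrink in proportion to the cell size, as is common for numerical stability. The function name is ours.

```python
def relative_cost(old_km, new_km, shorten_time_step=True):
    """Approximate cost multiplier when refining the horizontal mesh."""
    ratio = old_km / new_km
    cost = ratio ** 2          # more cells in both horizontal directions
    if shorten_time_step:
        cost *= ratio          # assumption: time step shrinks with cell size
    return cost


print(relative_cost(100, 20))                           # cells and time steps
print(relative_cost(100, 20, shorten_time_step=False))  # cells only
```

Under these assumptions, a mesh five times finer means about 25 times more cells and on the order of 100 times more computation, which is why such a refinement depends on the next generation of supercomputers.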

Researchers working on climate models are also trying to improve a very important point: the energy frugality of the calculations made by the models. Indeed, the energy consumption of these supercomputers is far from negligible, and with a view to carbon neutrality, this subject should not be avoided. As a reminder, this carbon footprint is attributed to public services, and is therefore counted in the footprint of every French person. If you want to know more, you can look at what Labos 1point5 is doing, a collective of members from the academic world with a common goal: to better understand and reduce the impact of scientific research activities on the environment, in particular on the climate.

The last word

- Climate models are important simulation tools for improving our understanding of climate behaviour on seasonal, annual, decadal and centennial time scales. They help determine the extent to which observed changes in climate may be due to natural variability, human activity or a combination of both.

- They are defined as “highly sophisticated computer programs that encompass our understanding of the climate system and simulate, with as much fidelity as possible, the complex interactions between the atmosphere, the ocean, the land surface, snow and ice, the global ecosystem and various chemical and biological processes”.

- Today’s models reflect a better understanding of how climate processes work – an understanding that has followed ongoing research and analysis, as well as new and improved observations.

- To say that the models are not tested and validated is wrong: their robustness relies in particular on the fact that they are tested and evaluated over several years and by several international teams.

- Some models perform better than others for certain climate variables. No single model is clearly “the best”; it is the multi-model average that gives the best results.

- Despite the progress made, scientific uncertainty about the details of several processes (such as certain types of clouds) remains.

- Research funding is important to improve the quality of climate models, while keeping energy frugality as an objective.

Thumbnail credit : © Animea, F. Durillon for LSCE / CEA / IPSL